
The following `encode()` parameters control what is returned:

- `output_value` – Set to None to get all output values.
- `convert_to_numpy` – If true, the output is a list of numpy vectors. Else, it is a list of pytorch tensors.
- `convert_to_tensor` – If true, you get one large tensor as return. Overwrites any setting from `convert_to_numpy`.
- `device` – Which device to use for the computation.
- `normalize_embeddings` – If set to true, returned vectors will have length 1. In that case, the faster dot-product (`util.dot_score`) can be used instead of cosine similarity.

Returns: By default, a list of tensors is returned. If `convert_to_tensor`, a stacked tensor is returned. If `convert_to_numpy`, a numpy matrix is returned.

Sentence embeddings can also be computed with the `transformers` library directly, applying the pooling step by hand:

    from transformers import AutoTokenizer, AutoModel
    import torch

    # Mean Pooling - Take attention mask into account for correct averaging
    def mean_pooling(model_output, attention_mask):
        token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
        input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
        sum_embeddings = torch.sum(token_embeddings * input_mask_expanded, 1)
        sum_mask = torch.clamp(input_mask_expanded.sum(1), min=1e-9)
        return sum_embeddings / sum_mask

    # Sentences we want sentence embeddings for (placeholder inputs)
    sentences = ['This is an example sentence', 'Each sentence is converted']

    # Load AutoModel from huggingface model repository
    tokenizer = AutoTokenizer.from_pretrained("sentence-transformers/all-MiniLM-L6-v2")
    model = AutoModel.from_pretrained("sentence-transformers/all-MiniLM-L6-v2")

    # Tokenize sentences
    encoded_input = tokenizer(sentences, padding=True, truncation=True, max_length=128, return_tensors='pt')

    # Compute token embeddings
    with torch.no_grad():
        model_output = model(**encoded_input)

    # Perform pooling. In this case, mean pooling
    sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

In the above example we add mean pooling on top of the AutoModel (which will load a BERT model). We also have models with max-pooling and models where we use the CLS token. To see how to apply these pooling strategies correctly, have a look at sentence-transformers/bert-base-nli-max-tokens and sentence-transformers/bert-base-nli-cls-token.
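The pooling strategies and the `normalize_embeddings` option described above can be tried on a toy input without downloading a model. The following is a dependency-free sketch in plain Python, not the library's implementation: the helper names (`mean_pool`, `max_pool`, `cls_pool`, `normalize`, `dot`, `cosine`) and the toy vectors are assumptions made purely for illustration.

```python
import math

def mean_pool(token_embeddings, attention_mask):
    """Average the token vectors of one sentence, skipping padded positions."""
    summed = [0.0] * len(token_embeddings[0])
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            for i, value in enumerate(vector):
                summed[i] += value
    # Lower bound on the divisor, so an all-padding row cannot divide by zero.
    count = max(sum(attention_mask), 1e-9)
    return [s / count for s in summed]

def max_pool(token_embeddings, attention_mask):
    """Element-wise maximum over the non-padded token vectors."""
    kept = [v for v, keep in zip(token_embeddings, attention_mask) if keep]
    return [max(column) for column in zip(*kept)]

def cls_pool(token_embeddings):
    """Use the first token's vector (the CLS position) as the sentence vector."""
    return list(token_embeddings[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(vector):
    """Scale a vector to length 1."""
    norm = math.sqrt(dot(vector, vector))
    return [v / norm for v in vector]

def cosine(a, b):
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

# Toy sentence: three real tokens followed by one padding position.
tokens = [[1.0, 2.0], [3.0, 4.0], [5.0, 0.0], [9.0, 9.0]]
mask = [1, 1, 1, 0]

masked_mean = mean_pool(tokens, mask)          # padding row is ignored
naive_mean = mean_pool(tokens, [1, 1, 1, 1])   # padding row leaks into the average
max_pooled = max_pool(tokens, mask)
cls_vector = cls_pool(tokens)

# For unit-length vectors the dot product and cosine similarity coincide.
dot_of_normalized = dot(normalize(masked_mean), normalize(max_pooled))
cosine_of_raw = cosine(masked_mean, max_pooled)
```

Comparing `masked_mean` with `naive_mean` shows why the attention mask matters: without it, padding vectors shift the sentence embedding. The last two values agree up to floating-point error, which is why a plain dot product is sufficient once embeddings are normalized.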

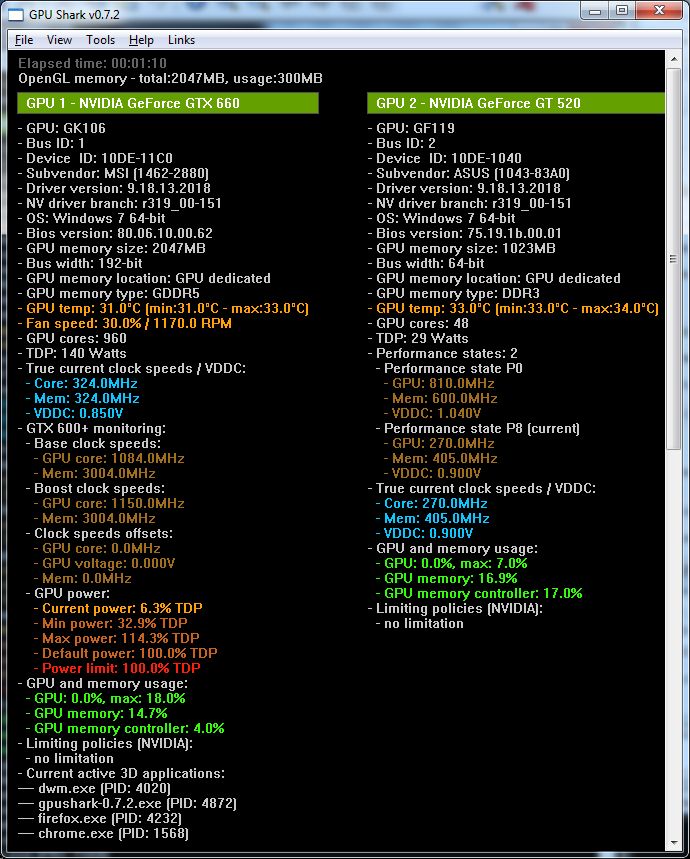

A model can be loaded onto a specific device:

    model = SentenceTransformer('model_name_or_path', device='cuda')

With `device`, any PyTorch device (such as `cpu`, `cuda`, or `cuda:0`) can be used.

The relevant method to encode a set of sentences / texts is `model.encode()`. In the following, you can find the parameters this method accepts. Some relevant parameters are `batch_size` (depending on your GPU, a different batch size is optimal) as well as `convert_to_numpy` (returns a numpy matrix) and `convert_to_tensor` (returns a pytorch tensor).

`SentenceTransformer(model_name_or_path=None, modules=None, device=None, cache_folder=None, use_auth_token=None)`

Loads or creates a SentenceTransformer model that can be used to map sentences / text to embeddings.

- `model_name_or_path` – If it is a filepath on disk, it loads the model from that path. If it is not a path, it first tries to download a pre-trained SentenceTransformer model. If that fails, it tries to construct a model from the Huggingface models repository with that name.
- `modules` – This parameter can be used to create custom SentenceTransformer models from scratch.
- `device` – Device (like 'cuda' / 'cpu') that should be used for computation.
- `use_auth_token` – HuggingFace authentication token to download private models.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

`encode(sentences, batch_size=32, show_progress_bar=None, output_value='sentence_embedding', convert_to_numpy=True, convert_to_tensor=False, device=None, normalize_embeddings=False)` → a list of tensors, a `numpy.ndarray`, or a `torch.Tensor`

- `batch_size` – The batch size used for the computation.
- `show_progress_bar` – Output a progress bar when encoding sentences.
- `output_value` – Default `sentence_embedding`, to get sentence embeddings. Can be set to `token_embeddings` to get wordpiece token embeddings.

GPU Shark II changelog:

- added support of Intel Arc A570M, Arc A530M, Arc Pro A60M and A30M.
- added support of NVIDIA GeForce RTX 4060 Ti 16GB.
- added support of AMD Radeon RX 7900 GRE.
- added support of AMD Radeon PRO W7900, PRO W7800, fixed Radeon RX 6850M XT name (XT was missing).
- GPU monitoring update is now done in a separate thread. This feature can be changed via gpu_monitoring_separate_thread in a.
- Horizontal splitting size when two or more GPUs are detected.
- added the energy consumption of GPUs in kWh.

- fixed a crash on some systems with Radeon GPUs.

GPU Shark 2 is powered by GeeXLab, the army knife of programming tools.

DESCRIPTION: GPU Shark II is the successor of the first GPU Shark. GPU Shark II is a GPU / graphics card information and monitoring utility for Windows and Linux.
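The changelog above mentions that GPU energy consumption is reported in kWh. How GPU Shark II derives that figure is not described here. One generic way to obtain such a number is to integrate sampled power draw over time, as in the sketch below. The function name `energy_kwh`, the sample format, and the readings are all hypothetical and are not taken from GPU Shark.

```python
def energy_kwh(samples):
    """Integrate (timestamp_seconds, power_watts) samples with the trapezoidal rule.

    Hypothetical helper: assumes samples are sorted by timestamp.
    """
    joules = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        # Average power over the interval times its duration gives energy in joules.
        joules += 0.5 * (p0 + p1) * (t1 - t0)
    return joules / 3_600_000.0  # 1 kWh = 3.6e6 J

# Made-up readings: power ramps from 100 W to 200 W over 30 minutes, then holds for 30 minutes.
readings = [(0, 100.0), (1800, 200.0), (3600, 200.0)]
total_kwh = energy_kwh(readings)
```

With these made-up readings, the first half hour averages 150 W and the second holds 200 W, so the total is 0.175 kWh.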
